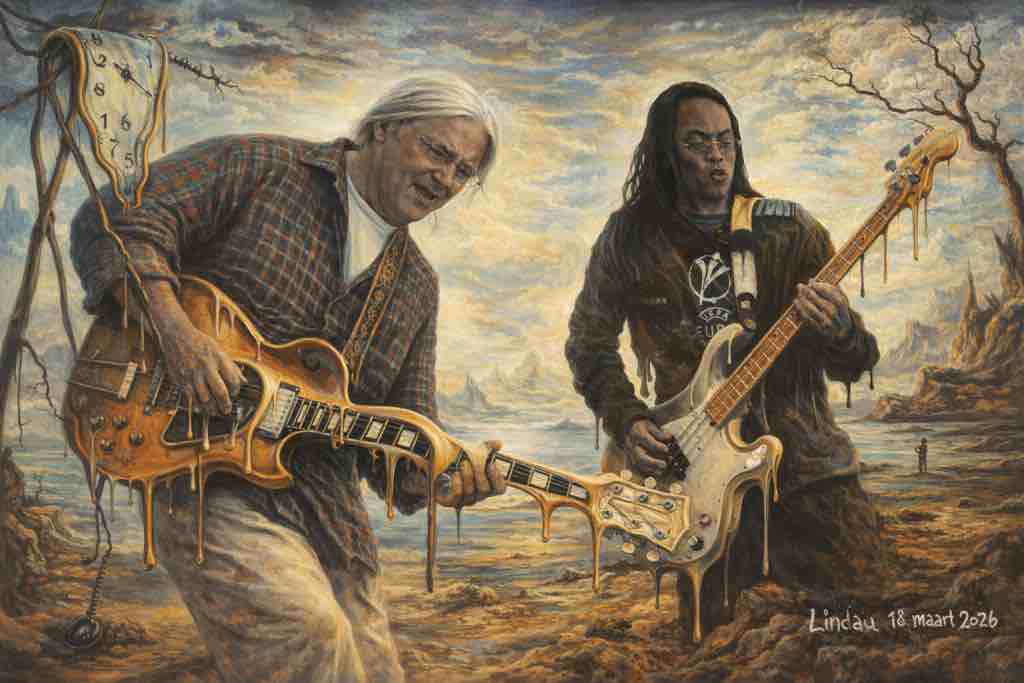

Rotterdam – You can feel it in the pace, in the way the first experiments stop being “testing” and start turning into routine craft. This is the next phase, built on experience: time spent learning AI tools the way you’d learn a new camera, a new pedal, a new workflow, by doing, failing, correcting, and doing again. The conversation was clear about the arc: hands-on experience with AI tools such as Copilot and ChatGPT, and then a change in the craft itself. The artworks keep getting better, not magically but incrementally, step by step, because the creator is evolving along with the tools.

In the early days, the learning curve was practical: practicing with Facebook photos, collecting faces, trying variations, feeding the machine until the machine stopped feeling like a foreign body and started feeling like an instrument. That matters, because generative systems often make people think they can skip the fundamentals. But the real progress came from leaving plenty of creative room to the computer while still holding onto taste, restraint, and intent. The result wasn’t just “more art.” It was a new kind of collaboration—part human judgement, part AI speed, part automated exploration.

This is where Rotterdam’s energy seeps through the floorboards. Not in the form of slogans, but in the texture: the workshop mood, the warehouse rhythm. AI iteration resembles logistics—repeat, measure, adjust, ship. In a port city, you don’t confuse a blueprint with the dockside reality. You build, you move, you refine. And in this case, the refinement is aesthetic. The machine helps accelerate the cycle of drafts, style experiments, and face refinements, until the output looks like something that could belong in a gallery rather than a sandbox.

The creator is also explicit about an important detail: they are an amateur, and AI “strengthens everything,” as if it nurtured the journey. That phrasing matters sociologically, because it suggests a shift in how creative identity works. Amateur no longer means powerless. Amateur can mean flexible, hungry, iterative, and—when paired with tools—capable of producing work with a level of polish that once required years of professional pipeline knowledge.

On the surface, it’s about images. Underneath, it’s about capability and empowerment: who gets to make, who can afford to try, and who can reach a higher finish without burning out on technique alone. And those questions don’t stay inside art circles. They spill outward into labor markets, media ecosystems, and even the trust people place in what they see.

AI’s growing role also shows up in the creator’s approach to legacy. Old works don’t get abandoned; they get revisited. The conversation described taking earlier personal pieces and editing them into the styles of famous artists. That’s not only a creative exercise—it’s a form of retroactive learning. When you apply a style transfer, you’re forced to confront composition, contrast, texture, and mood in a more structured way. You notice what the “old you” did, what the “style lens” demands, and what becomes clearer when the machine magnifies the grammar of visuals.

From that perspective, the “next phase” is not a marketing term. It’s a training loop. The creator is not just generating outputs; they are learning to direct a system.

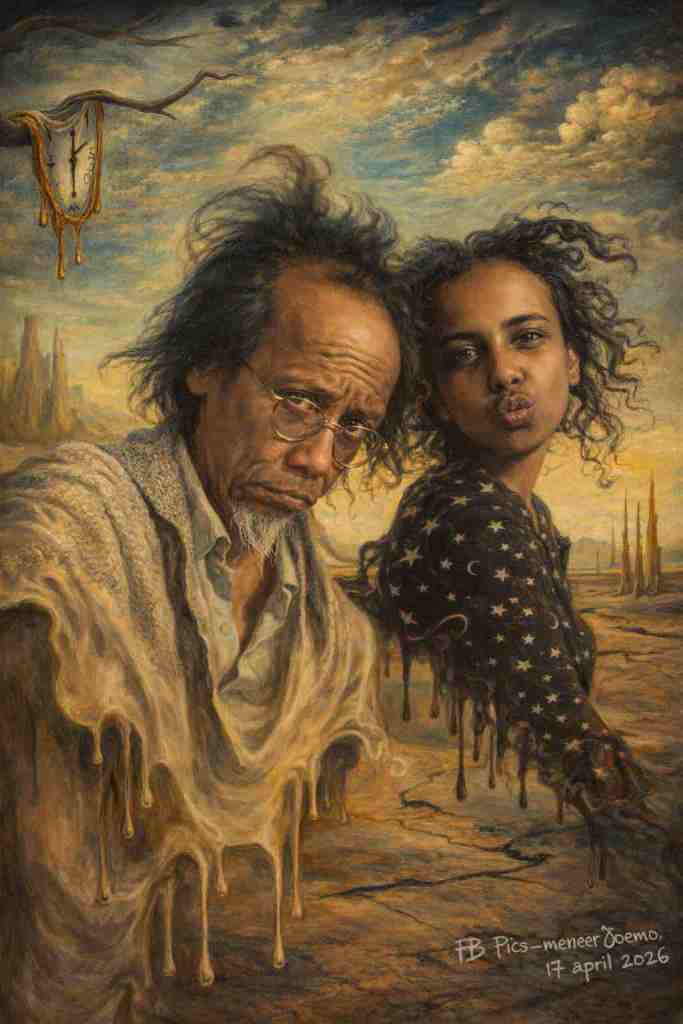

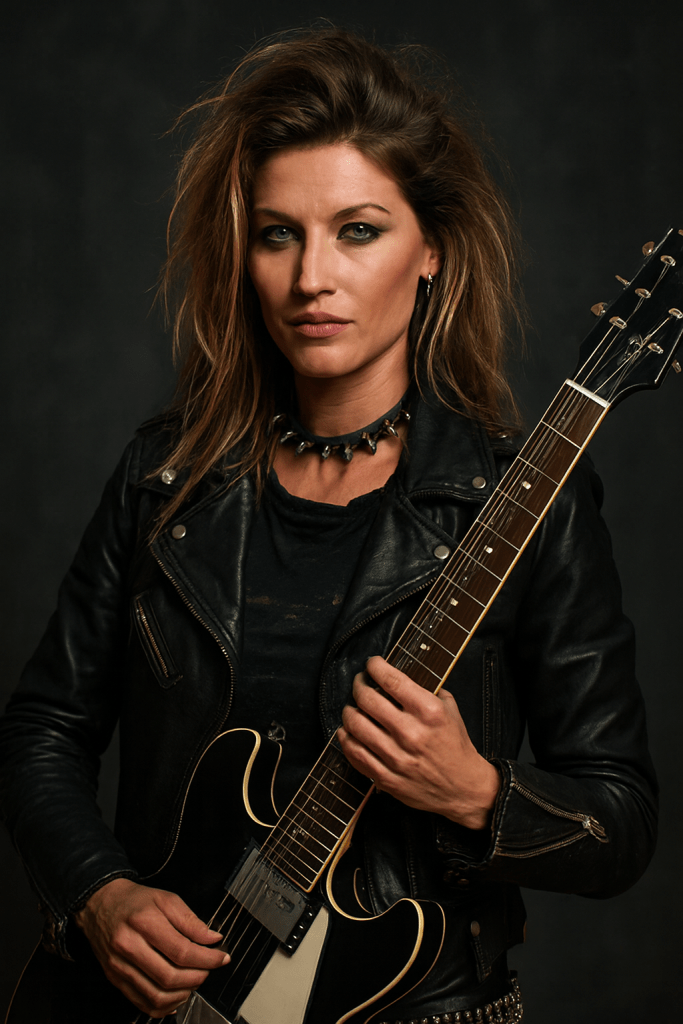

Bringing Two Characters to Life: Belle and Alie Under a Fixed Face, With More to Come

The most concrete artistic development described was about characters: Belle and Alie. The ambition wasn’t vague. It involved bringing both characters to life with a stable facial identity—“a fixed face”—so that the characters feel consistent across iterations. That’s a practical challenge in generative art, and it connects directly to credibility. Viewers don’t just respond to beauty; they respond to coherence. A character that changes face every time reads like noise, not storytelling.

Here, AI becomes a tool for character continuity. The creator isn’t chasing one-off visuals; they want the characters to remain recognizable. That means the process likely includes careful prompting, consistent reference usage, and iterative selection—choosing outputs that keep the identity intact. The conversation suggested that the machine is still in development and that the creator is developing too. That dual growth is what turns an experiment into an ongoing series.

This is where the Rotterdam street rhythm matters again: continuity is what keeps a neighborhood coherent. In the port and in the districts around it, you recognize addresses by pattern—buildings, logistics flows, wind direction off the water. You learn to read structure. The same logic applies to character art: you don’t build a series on random faces; you build it on recognizable identity.

The narrative energy of the conversation made it clear that the creator is not only improving technical outputs, but expanding the world around the characters. “And even more” was part of the description, which suggests the next step isn’t simply enhancing images but expanding the cast or deepening the series. That points to a shift from experimentation to a production mindset.

There’s also a broader cultural angle. When people use AI to keep character identities stable, they’re moving toward a future where personal universes can be built faster and creators can develop a visual language at a higher volume. That could mean more art, but it also means more pressure. When outputs multiply, attention becomes the bottleneck. In a city like Rotterdam, where commerce and logistics are always competing for flow, you understand attention as a kind of cargo. More shipments can flood the harbor unless the system organizes itself.

So the “Belle and Alie” development isn’t merely aesthetic. It signals a move toward series-based creation, which can influence how online audiences consume art—favoring recognizable brands of visuals, even if they’re AI-assisted.

And with AI, “brand” can be a double-edged sword. Consistency attracts audiences, but it can also amplify the reach of misleading imagery if identity and provenance are weak. That’s why the next part—the political angle—could never stay purely playful.

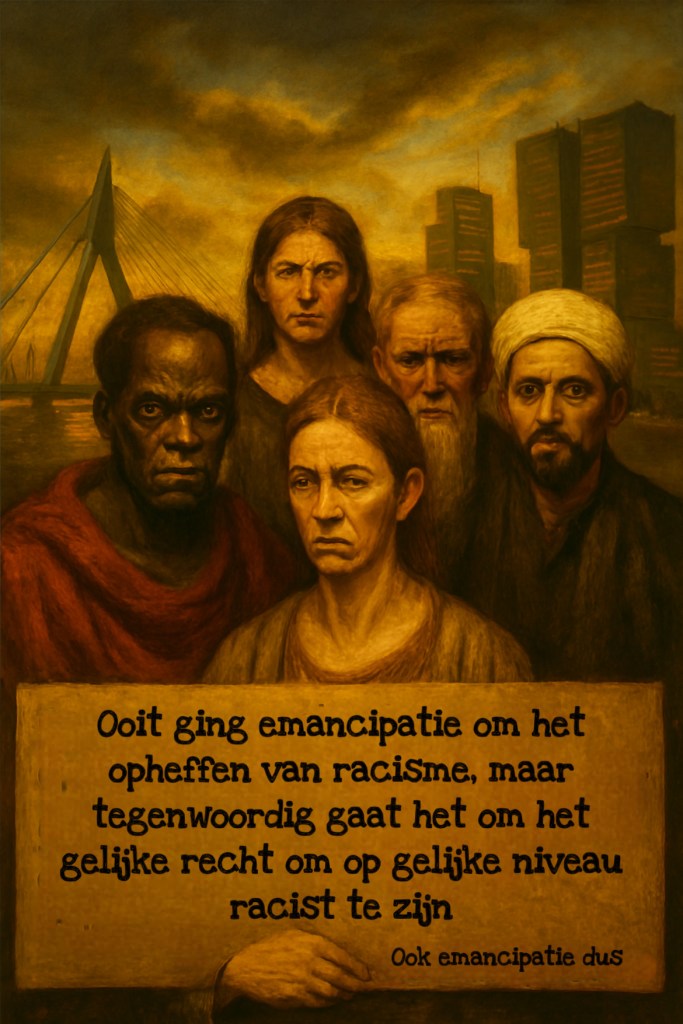

The Political Beast: When AI Meets Memes, Faces, and Public Trust

The conversation then pivoted to politics, and the metaphor was striking: a political “beestje”—a little beast that feeds on attention and provokes reaction. The creator described using AI for political opportunities: generating or editing images with known political figures, turning memes into something sharper, more shareable, and visually more convincing. The move is from ordinary Facebook-based tinkering to a “next phase” with stronger tooling and new outputs.

In other words: the same AI competence used for artistic refinement is also being used to intensify political content.

This is where the journalistic lens has to land on consequences. Memes are often treated as trivial, but they work as public messaging systems. If AI improves the look and realism of political imagery, it can compress the time between idea and impact. A meme isn’t just a joke; it can function as a propaganda micro-unit—small, fast, emotionally loaded, designed for repetition. When the visuals become stronger, the emotional stickiness can rise too.

The conversation mentioned “editing Facebook memes,” which is a critical clue. Facebook remains a major vector for image-based persuasion in everyday life. When AI boosts the output quality, it can increase the likelihood that people perceive the content as credible—even when it isn’t. That doesn’t mean every piece is false; it means the barrier to deception lowers.

Now connect that to tangible societal stakes, because it’s not only about abstract “information quality.” It affects:

- Safety: misleading images can inflame harassment, misdirect anger, or accelerate social conflict.

- Trust: when people can’t reliably judge authenticity, social friction rises.

- Time cost: everyone spends more effort checking what they see.

- Money and politics: trust erosion can translate into polarization, which eventually affects policy direction and institutional stability.

And in Rotterdam terms, institutions are like infrastructure. When the system’s reliability drops, you don’t just get slower transport—you get expensive detours. In the world of everyday life, those detours show up as stress, higher personal risk, and more social fragmentation.

There’s also an economic layer. AI-assisted meme production can change labor patterns. Some people may find it easier to create mass content without traditional media skills. That can displace certain roles while creating new “prompting/editing/curation” tasks. But in the short term, it often favors whoever can iterate fastest, which is frequently the side with more time, devices, and technical comfort.

And yes, the port image returns: faster content is faster “throughput.” But throughput without quality checks can clog the harbor.

Practice on the Timeline: Training, Iteration, and the Feeling That AI “Matures”

A key part of the conversation wasn’t only what the creator built, but how the creator experienced building. The shift described was from “lots of creativity left to the computer” toward a more directed mode, because experience accumulates. The phrase “as if it matured me” captures something many users notice: tools don’t just generate images; they teach the user through feedback loops.

You can see it in the step progression:

- Use the tools for practice (Facebook photos).

- Let the system explore (creativity shared with the computer).

- Refine outputs until the aesthetic improves.

- Apply new consistency constraints (fixed character faces).

- Expand scope (old works restyled in the manner of famous artists).

- Broaden the application area to political meme editing.

That progression resembles a pilot program—except it’s personal. The creator is treating AI like an apprenticeship system, where the “mentor” is the tool’s generative responses and the “student” is the user’s improving judgement.

In a societal context, that kind of maturation has implications. If more people experience success early, adoption rises. If adoption rises, the volume of AI-generated images in everyday spaces like social networks increases. That can lead to more confusion unless media literacy and verification mechanisms keep up.

And verification has a cost. Not necessarily money in every case, but time and attention: mental energy. In a country where household budgets are already under pressure from energy prices, that additional cognitive overhead becomes one more item in the everyday equation.

Even if each viewer spends only a second or two assessing a suspicious image, that adds up to a measurable drag across millions of views. Two seconds across one million views already comes to roughly 555 hours of collective attention. It’s similar to how Rotterdam deals with congestion: small delays add up when thousands of trucks hit the same corridor.

So when the conversation ends with “we go to a next phase,” it’s more than personal ambition. It’s a window into a broader pattern: AI competence moves from niche to normalized routine, and normalization affects public information flows.

Real-World Impacts: Creative Empowerment Meets Job, Energy, Safety, and the Port’s Attention Economy

The heart of the conversation is creative development—but the societal analysis has to sit underneath it like concrete under a street. Generative AI doesn’t exist in a vacuum. It touches:

1) Your pocketbook and the energy price logic

AI tools require computation. Even if individual users pay through subscriptions or indirectly through platform costs, the broader economy absorbs the energy and infrastructure expenses. As energy prices rise or fluctuate, the cost of large-scale computation puts pressure on services and platforms, and ultimately on adoption. When demand increases because more people create and share, platforms may adjust pricing or usage limits.

For creators, that can shift who can afford experimentation. The creator’s remark about being an amateur whom AI “strengthens” in everything points to democratization. But that democratization stays partial if tool access becomes more expensive.

2) Jobs and skills

AI-assisted image creation and editing can reduce demand for certain low-level creative tasks while increasing demand for new workflows: concept direction, prompt strategy, selection/curation, and compliance awareness. In labor terms, it’s not just “jobs lost or gained.” It’s job transformation.

People who can’t adapt might lose opportunities. People who can adapt become faster competitors. That dynamic can increase insecurity, even as it increases output and creativity.

3) Safety and authenticity

Political memes are particularly sensitive. When AI improves the aesthetics and plausibility of political imagery, it can amplify misinformation risks and intensify online conflict. The public feels these effects as harassment spikes, reputational attacks, and social polarization.

4) Public trust and institutional friction

When authenticity becomes ambiguous, people demand more evidence. Evidence gathering is slow; it’s expensive; it’s exhausting. That creates friction between citizens and institutions, and it can affect civic stability.

5) The port’s attention economy: trade meets content

Rotterdam is built on logistics—on moving goods through constrained channels. Social media is also a logistics system: content moves through feeds under constraints of attention, engagement metrics, and platform visibility. AI increases the throughput of content creation. If the “harbor authority” (moderation, labeling, verification) doesn’t scale at the same speed, the system fills with low-quality or deceptive cargo.

That’s the tangible consequence layer: not just moral panic, but practical congestion of trust.

So, the “next phase” described in the conversation—art, character continuity, style transfer, and political meme editing—should be understood as part of a broader infrastructure shift. Creativity and politics are sharing the same engine now. The engine is generative AI. The question is whether society is building enough safeguards, enough verification habits, and enough institutional capacity to prevent that engine from becoming a chaos machine.

A Bridge Between Art and Politics: Why “Pics & Kieks” Signals More Than Play

The closing phrase, “Volg Pics & Kieks” (“Follow Pics & Kieks”), reads like a personal branding signal, a way to mark a channel of updates. But in context, it also signals a shift from isolated creation to public-facing output: more sharing, more iteration, more visibility. That’s where the creator’s artistic world and political meme world could start overlapping in audience perception.

When the same creator (or the same tool ecosystem) can generate both polished characters and persuasive political imagery, the audience may struggle to separate entertainment from manipulation—especially if visuals carry an aura of credibility simply because they look well-made.

This is not a claim that all AI political imagery is deceptive. It’s a claim about perception. Visual fluency can mislead, even when the content is intended as humor.

Rotterdam’s street energy frames this well: in a busy district, the line between legit commerce and shady dealing depends not only on intent, but on clarity of signals. If signals blur, people hesitate, prices rise, and safety becomes a concern. In online spaces, blurring signals produces similar consequences: hesitation, distrust, and friction.

So the next phase isn’t only “more art.” It’s a broader media reality where the aesthetics of AI become a multiplier. The creator’s workflow is a case study: practice with Copilot and ChatGPT, consistent character faces, style transfer from famous artists, and then meme-level political experimentation. All of it demonstrates how fast the creative engine is moving.

And the port city perspective—harbor, wind, Maas, industry, logistics—keeps the analysis grounded. In Rotterdam you don’t pretend systems are infinite. You measure flows. You manage risk. You watch the weather and the tides. You build for reliability.

That’s what this conversation ultimately points toward: AI creation is accelerating, but the world receiving those creations—art platforms, social feeds, political discourse—has to adapt too, or the consequences will show up in pockets, jobs, safety, and trust.
